The most popular subdomains on the internet


Fuzzing is fun. But fuzzing is even more fun when you have a solid wordlist to work with. When it comes to hunting down subdomains there are a few lists out there to plug into your fuzzer, but most are small, one-shot affairs. I set out to build a list of popular subdomains which was comprehensive and could be easily kept up-to-date.

For this project I needed to get hold of DNS records. A lot of DNS records. After trying various sources, I settled upon Rapid7's Project Sonar Forward DNS data set, which includes "... regular DNS lookup for all names gathered from the other scan types, such as HTTP data, SSL Certificate names, reverse DNS records, etc". Rapid7's data set uses a really nice mix of real-world sources and is regularly updated. Perfect.

The first challenge was how to handle 1.4 billion (68 GiB) raw DNS records in a reasonable amount of time; my first attempt at processing the data took well over a week to complete on a reasonably beefy server, hardly ideal for updating frequently.

Here's the process I needed to optimise:

- Trim non-domain name data and de-dupe. The data set contains all DNS record types (mx, txt, cname, etc), so dupes are common.

- Remove suffixes using a list built from the Public Suffix List. .com, .co.uk, .ninja, etc need to go so we can properly distinguish subdomains from domains.

- Extract subdomains and tally up the number of times each occurs. One point per subdomain per domain to keep things fair.

- Sort the results by tally. And we're done!
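The tallying rule in the steps above (one point per subdomain per domain, then sort by tally) can be sketched roughly like this. It is a minimal illustration, not the DNSpop code: the function name `tally_subdomains` and the sample pairs are made up, and it assumes each record has already been reduced to a (subdomain, domain) pair.

```python
from collections import Counter

def tally_subdomains(pairs):
    """Count each subdomain once per domain, then rank by count."""
    # A set collapses repeats of the same (subdomain, domain) pair, e.g. one
    # host that shows up under several DNS record types.
    unique_pairs = set(pairs)
    counts = Counter(sub for sub, _domain in unique_pairs)
    # Highest tally first; ties are broken alphabetically for stable output.
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))

# Hypothetical sample data.
pairs = [
    ("www", "example-one"),
    ("www", "example-one"),   # duplicate record: still only one point
    ("www", "example-two"),
    ("mail", "example-one"),
]
ranked = tally_subdomains(pairs)
print(ranked)
```

At 1.4 billion records an in-memory set would be a stretch, which is presumably why sort and uniq end up doing this job on disk; the sketch only shows the counting rule.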

Optimisation

The second step, removing domain suffixes, took the most time: this part alone ran for days. I spent a good while trying out different solutions, from a parallel sed with a sizeable regex to native bash processing. I eventually settled on a Python script, which cut the processing time right down to just under 2 hours.
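For a feel of what suffix stripping involves, here is a minimal sketch. It is not the script from the repo: `SUFFIXES` is a tiny hypothetical stand-in for a set built from the Public Suffix List, `strip_suffix` is an illustrative name, and the wildcard and exception rules in the real list are ignored.

```python
# Hypothetical stand-in for a set built from the Public Suffix List.
SUFFIXES = {"com", "co.uk", "uk", "ninja"}

def strip_suffix(hostname, suffixes=SUFFIXES):
    """Return hostname minus its longest matching suffix, or None if nothing is left."""
    labels = hostname.lower().rstrip(".").split(".")
    # Walk from the longest candidate suffix to the shortest, so that
    # "co.uk" is matched before "uk".
    for i in range(len(labels)):
        candidate = ".".join(labels[i:])
        if candidate in suffixes:
            # An empty remainder means the name was itself a bare suffix.
            return ".".join(labels[:i]) or None
    return None  # no known suffix matched

print(strip_suffix("www.example.co.uk"))
```

Each candidate is checked with a set lookup, so the cost per hostname depends on the number of labels rather than on the size of the suffix list, which is one plausible reason a script like this beats a large regex.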

Next I trained my eye on the system commands I was using. A considerable amount of time (many hours) was taken up by awk, sort, uniq and company. I did some research and in one fell swoop cut this down to mere minutes by setting the LC_ALL=C environment variable, which makes these tools compare raw bytes instead of applying locale-aware collation. That is a good fit for subdomains, which are plain ASCII. For more information on why this works, see Jacob Nicholson's blog post on the topic.
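If the pipeline is driven from Python, the same trick can be applied per command by passing a modified environment. The sketch below assumes a Unix-like system with `sort` on the PATH; the helper name `sort_bytes` and the sample names are made up for illustration.

```python
import os
import subprocess

def sort_bytes(lines):
    """Sort lines with the system sort, forcing byte-wise comparison."""
    # Copy the current environment and override the locale for this call only.
    env = dict(os.environ, LC_ALL="C")
    result = subprocess.run(
        ["sort"],
        input="\n".join(lines) + "\n",
        capture_output=True,
        text=True,
        env=env,
        check=True,
    )
    return result.stdout.splitlines()

sorted_names = sort_bytes(["www", "Mail", "api", "2tty"])
print(sorted_names)
```

In the C locale, digits sort before uppercase letters, which sort before lowercase letters, so the order can differ from what a locale such as en_GB.UTF-8 would give. That is worth remembering if a later step expects locale-ordered input.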

Selection

The next question was which part of the domain to use. Just the left-most subdomain? Explode and tally all subdomain parts? Use all of the subdomain parts as one entry? I tried the last approach and the results were more than a little messy: I found little consistency in how people structure their sub-subdomains.

Going with the first option and using just the left-most subdomain I got really good results, with a clear list of winners emerging. I also found that using this list recursively covered the majority of sub-subdomain uses. Win-win. I didn't try splitting and using all subdomain parts, but I strongly suspect diminishing returns.
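To make the left-most approach and the recursive use of the list concrete, here is a small sketch. The function names, the three-word list, and example.com are all illustrative assumptions, and no DNS lookups are performed; the "discovered" host in the second pass is simply pretended.

```python
def leftmost_label(subdomain_part):
    """Return the left-most label of a dotted subdomain, e.g. 'dev' from 'dev.api'."""
    return subdomain_part.split(".", 1)[0]

def candidates(wordlist, parent):
    """Yield one candidate hostname per word beneath the given parent name."""
    for word in wordlist:
        yield f"{word}.{parent}"

words = ["www", "dev", "api"]  # hypothetical slice of the wordlist

# First pass: try every word directly under the target domain.
first_pass = list(candidates(words, "example.com"))

# Second pass: pretend api.example.com was found, then reuse the same list
# beneath it to reach sub-subdomains.
second_pass = list(candidates(words, "api.example.com"))

print(leftmost_label("dev.api"), first_pass, second_pass)
```

Reusing one flat list at each level keeps the wordlist small while still reaching names like dev.api.example.com.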

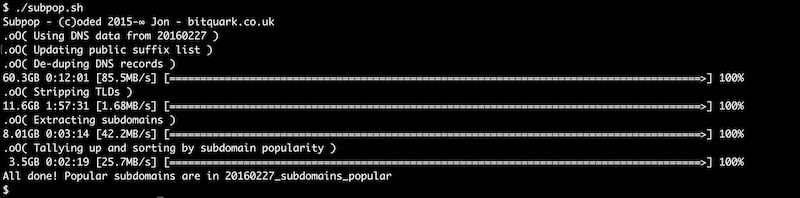

After the above optimisations the whole process takes around 2.5 hours of grunt work on a server with a 10-core (20-thread) Intel Xeon running at 3 GHz, with 48 GB of memory. Performance would likely be improved by feeding data from an SSD.

The results

Without further ado, the 50 most popular subdomains as of 27th February 2016:

| Count | Subdomain | Notes |
|---|---|---|
| 20,395,943 | www | Obviously! |
| 1,090,647 | | |
| 258,838 | remote | Everyone loves remote access |
| 168,575 | blog | |
| 133,529 | webmail | |
| 129,202 | server | Creative |
| 100,849 | ns1 | |
| 92,737 | ns2 | |
| 73,465 | smtp | |
| 72,115 | secure | If you need a subdomain for this you have issues |
| 68,339 | vpn | |
| 63,883 | m | Lots of mobile sites |
| 62,808 | shop | |
| 60,777 | ftp | Still going strong |
| 58,484 | mail2 | |
| 44,481 | test | Well, hello |
| 44,115 | portal | |
| 43,645 | ns | |
| 43,624 | ww1 | |
| 42,235 | host | |
| 40,726 | support | |
| 40,107 | dev | Hello again |
| 37,666 | web | When www isn't enough |
| 37,345 | bbs | Yes, really. Looking into this, many people equate "BBS" with "Forum" |
| 37,131 | ww42 | Domains parked with a large domain squatter |
| 37,069 | mx | |
| 36,876 | | |
| 36,870 | cloud | Fluffy |
| 35,584 | 1 | |
| 35,481 | mail1 | |
| 34,475 | 2 | |
| 33,696 | forum | |
| 31,291 | owa | Good old Outlook |
| 31,254 | www2 | |
| 30,392 | gw | |
| 29,916 | admin | Likely a good target |
| 29,763 | store | |
| 29,251 | mx1 | |
| 29,124 | cdn | |
| 29,083 | api | |
| 28,691 | exchange | |
| 28,475 | app | |
| 26,728 | gov | Uhm |
| 26,459 | 2tty | Mostly from .asia and .pw TLDs |
| 26,229 | vps | |
| 24,964 | govyty | " |
| 24,951 | hgfgdf | " |
| 24,768 | news | |
| 24,521 | 1rer | " |
| 24,395 | lkjkui | " |

There are some predictable subdomains in the list (www, mail, ftp, etc), some more unexpected results (I didn't expect bbs to be so popular), and some results near the bottom (and continuing off the bottom of the list), such as dsasa and hgfgdf, for which I haven't been able to find an origin but which seem to mostly fall under the .asia and .pw top-level domains. If you know anything about these, drop me a comment below.

Code

I've made the code available in the DNSpop GitHub repo in case you want to make any changes or maintain your own list. I've also posted the most popular 1,000, 10,000, 100,000 and 1,000,000 subdomains using the latest data set. The top 1 million list is probably overkill for most fuzzing applications, but the 10k and 100k lists offer pretty good coverage.

Thanks go to Stephen Haywood, who coincidentally maintains an AXFR subdomain list, for discussion around optimising the subdomain stripping process. Additional thanks to Motoko for reminding me that the pv command exists, allowing progress to be shown, and giving hope, during processing.